System and environment requirements: Windows 10, GTX 1050 Ti, CUDA 10.x, VS2017, TensorRT 7.0.0.11

01 Installation and configuration

Download page: https://developer.nvidia.com/TensorRT

First download the TensorRT ZIP package, then extract it to D:\TensorRT-7.0.0.11

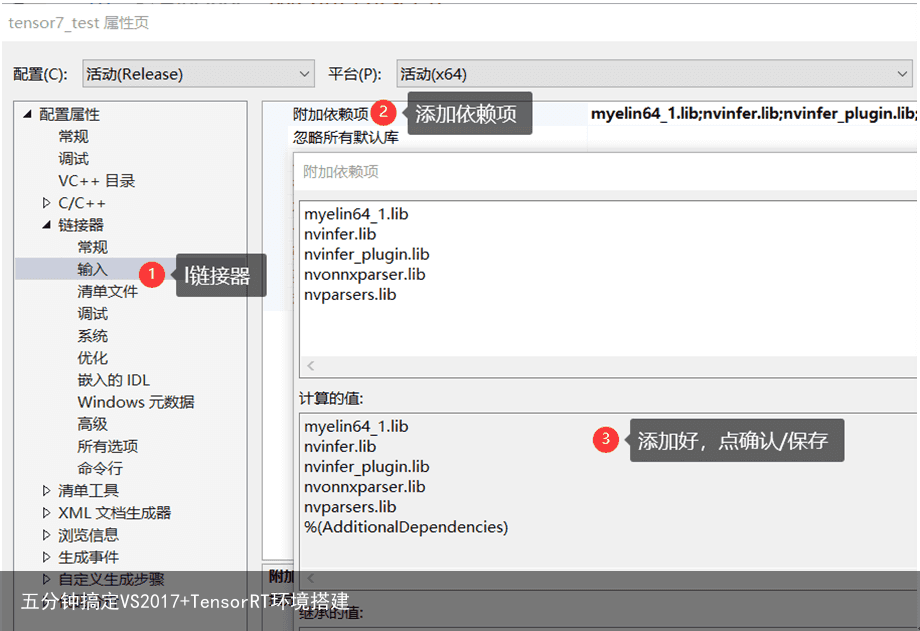

Then open VS2017, create a new empty project, and configure the following settings:

Include directories: D:\TensorRT-7.0.0.11\include

Library directories: D:\TensorRT-7.0.0.11\lib

Linker (additional dependencies):

myelin64_1.lib

nvinfer.lib

nvinfer_plugin.lib

nvonnxparser.lib

nvparsers.lib
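If the linker later complains that it cannot open one of these files, it helps to confirm that all five import libraries really exist in the library directory. Below is a minimal sketch of such a check in standard C++ only. The helper names `file_readable`, `missing_libs` and `tensorrt_libs` are my own for illustration and are not part of TensorRT.

```cpp
#include <fstream>
#include <string>
#include <vector>

// Returns true if the file at `path` can be opened for reading.
bool file_readable(const std::string& path) {
    std::ifstream f(path, std::ios::binary);
    return f.is_open();
}

// Returns the names from `libs` that cannot be opened inside `lib_dir`.
// The order of the result follows the order of `libs`.
std::vector<std::string> missing_libs(const std::string& lib_dir,
                                      const std::vector<std::string>& libs) {
    std::vector<std::string> missing;
    for (const std::string& name : libs) {
        // Forward slashes are accepted by the Windows file APIs as well.
        if (!file_readable(lib_dir + "/" + name)) {
            missing.push_back(name);
        }
    }
    return missing;
}

// The five import libraries listed in the linker settings above.
std::vector<std::string> tensorrt_libs() {
    return {"myelin64_1.lib", "nvinfer.lib", "nvinfer_plugin.lib",
            "nvonnxparser.lib", "nvparsers.lib"};
}
```

With the layout used in this post, `missing_libs("D:/TensorRT-7.0.0.11/lib", tensorrt_libs())` should return an empty vector. Any name it does return points to a file that was not extracted or to a mistyped directory.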

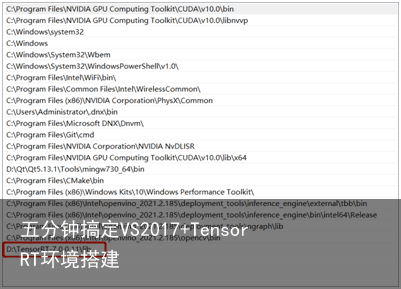

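Environment variable

Then add the following directory to the system environment variables (typically the Path variable, so that the TensorRT DLLs can be found at run time):

D:\TensorRT-7.0.0.11\lib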


Restart VS for the change to take effect.
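A program that starts but then fails with a missing DLL usually means the environment variable was not picked up. Here is a small sketch, in standard C++ only, that checks whether a directory appears in a `;`-separated PATH string. The function names are hypothetical helpers of mine, and I am assuming the directory was added to `Path`. The comparison ignores case, slash direction and a trailing separator, since Windows treats those as equivalent.

```cpp
#include <algorithm>
#include <cctype>
#include <cstdlib>
#include <sstream>
#include <string>

// Normalizes a directory string for comparison: backslashes only,
// lower case, and no trailing separator.
std::string normalize_dir(std::string dir) {
    std::replace(dir.begin(), dir.end(), '/', '\\');
    std::transform(dir.begin(), dir.end(), dir.begin(),
                   [](unsigned char c) { return static_cast<char>(std::tolower(c)); });
    while (dir.size() > 1 && dir.back() == '\\') {
        dir.pop_back();
    }
    return dir;
}

// Returns true if `dir` is one of the ';'-separated entries of `path_value`.
bool path_contains(const std::string& path_value, const std::string& dir) {
    const std::string wanted = normalize_dir(dir);
    std::istringstream entries(path_value);
    std::string entry;
    while (std::getline(entries, entry, ';')) {
        if (!entry.empty() && normalize_dir(entry) == wanted) {
            return true;
        }
    }
    return false;
}

// Reads PATH of the current process; returns an empty string when it is unset.
std::string current_path_value() {
    const char* value = std::getenv("PATH");
    return value ? std::string(value) : std::string();
}
```

Calling `path_contains(current_path_value(), "D:\\TensorRT-7.0.0.11\\lib")` from a program started inside VS shows whether the restarted IDE sees the new entry. Note that MSVC may emit a deprecation warning for `std::getenv`, depending on the project settings.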

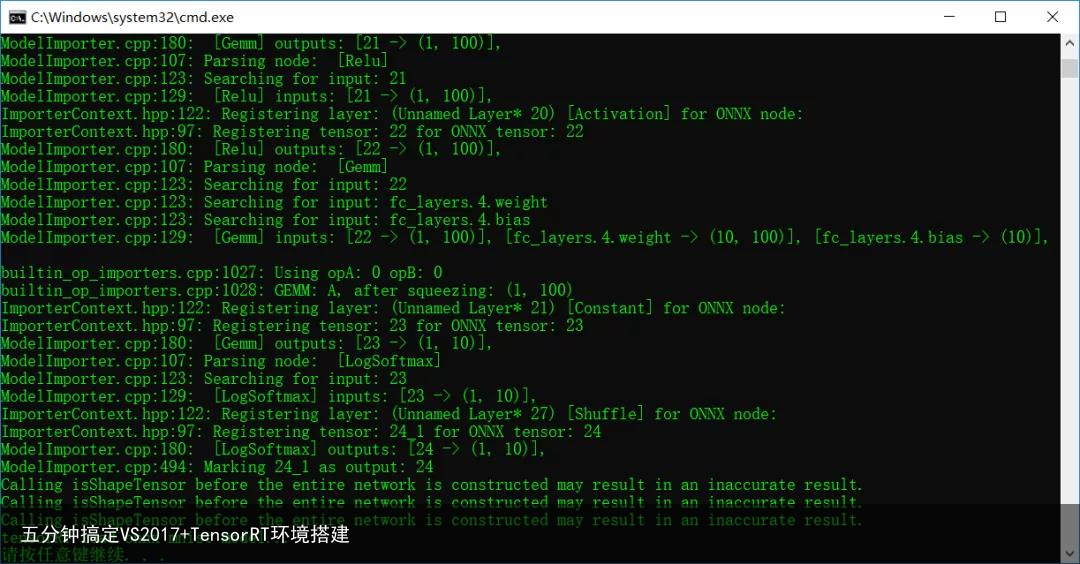

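02 Code verification and testing

In early 2020 I wrote a PyTorch "Hello World" program whose model I exported as mnist.onnx, so let me test whether TensorRT can load this ONNX model. After restarting VS2017, add a cpp file to the empty project created earlier and copy the following code into it:

    #include <cstdint>
    #include <cstdio>
    #include <iostream>
    #include <string>

    #include "NvInfer.h"
    #include "NvOnnxParser.h"

    using namespace nvinfer1;
    using namespace nvonnxparser;

    // Minimal logger that TensorRT uses to report messages.
    class Logger : public ILogger {
        void log(Severity severity, const char* msg) override {
            // suppress info-level messages
            if (severity != Severity::kINFO)
                std::cout << msg << std::endl;
        }
    } gLogger;

    int main(int argc, char** argv) {
        std::string onnx_filename = "D:/python/pytorch_tutorial/cnn_mnist.onnx";

        // Create the builder and an explicit-batch network definition.
        IBuilder* builder = createInferBuilder(gLogger);
        nvinfer1::INetworkDefinition* network = builder->createNetworkV2(
            1U << static_cast<uint32_t>(NetworkDefinitionCreationFlag::kEXPLICIT_BATCH));

        // Parse the ONNX file into the network; the second argument is the log verbosity.
        auto parser = nvonnxparser::createParser(*network, gLogger);
        parser->parseFromFile(onnx_filename.c_str(), 2);

        // Print every error the parser recorded.
        for (int i = 0; i < parser->getNbErrors(); ++i) {
            std::cout << parser->getError(i)->desc() << std::endl;
        }

        printf("tensorRT load onnx mnist model...\n");

        // Release the TensorRT objects in reverse order of creation.
        parser->destroy();
        network->destroy();
        builder->destroy();
        return 0;
    }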
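The expression passed to `createNetworkV2` can look odd at first: the enumerator is a bit position, and shifting `1U` by it produces a bitmask with that one bit set. The sketch below shows the same pattern on its own. `CreationFlag` is a stand-in enum I made up so the example compiles without TensorRT, and its enumerator values are assumptions for illustration, not copied from the TensorRT headers.

```cpp
#include <cstdint>

// Stand-in for nvinfer1::NetworkDefinitionCreationFlag (values assumed).
// Each enumerator names a bit position, not a mask.
enum class CreationFlag : std::int32_t {
    kEXPLICIT_BATCH = 0,
    kEXPLICIT_PRECISION = 1
};

// Converts a flag (bit position) into a mask with only that bit set.
constexpr std::uint32_t flag_bit(CreationFlag flag) {
    return 1U << static_cast<std::uint32_t>(flag);
}

// Returns true if `mask` has the bit for `flag` set.
constexpr bool has_flag(std::uint32_t mask, CreationFlag flag) {
    return (mask & flag_bit(flag)) != 0;
}
```

Several flags can be combined with bitwise OR, for example `flag_bit(CreationFlag::kEXPLICIT_BATCH) | flag_bit(CreationFlag::kEXPLICIT_PRECISION)`, which is why the API takes an unsigned integer rather than a single enum value.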

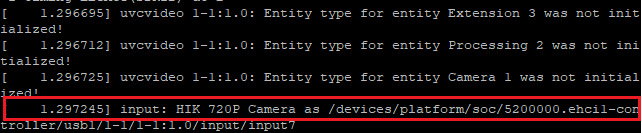

Build and run the program directly to see its output.

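Congratulations! The TensorRT development environment on Windows 10 is now configured. The whole setup takes less than five minutes, provided that the dependencies and libraries listed above are already installed.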
